If you’ve ever wondered why your in-laws continue to believe the earth is flat and climate change is a hoax, scientists may have found the answer — and it has nothing to do with intelligence.

A group of developmental psychologists from the University of Rochester and UC Berkeley discovered that feedback trumps (no pun intended) facts and physical evidence when it comes to beliefs and decision making.

The study, published in mid-August in Open Mind, recruited 500 adults through Amazon’s Mechanical Turk gig marketplace and asked them to identify which colored shapes were a “Daxxy,” and how sure they were of their choice. Because the concept of a “Daxxy” was made up by researchers, the participants had no idea what, exactly, constituted one. As it turned out, however, their certainty rose sharply whenever their most recent guesses had proven correct.

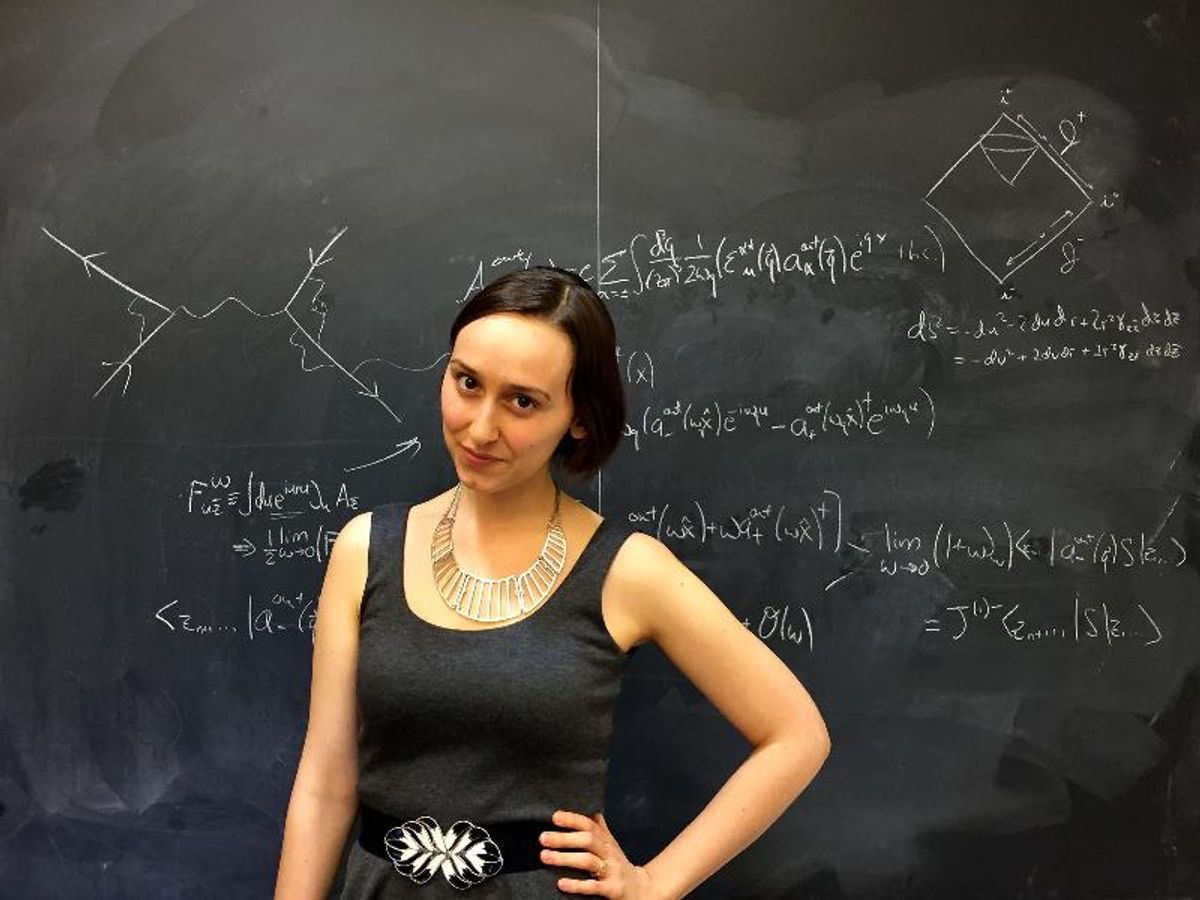

“If you use a crazy theory to make a correct prediction a couple of times, you can get stuck in that belief and may not be as interested in gathering more information,” said study senior author Celeste Kidd of UC Berkeley.

In other words, when participants were told they were doing well, they tended to become completely certain they were right and stopped investigating further.

“What we found interesting is that they could get the first 19 guesses in a row wrong, but if they got the last five right, they felt very confident,” said lead study author Louis Marti in a release. “It’s not that they weren’t paying attention, they were learning what a Daxxy was, but they weren’t using most of what they learned to inform their certainty.”

Much like birthers and 9/11 conspiracy theorists bolstering each other’s views on Facebook and message boards, positive feedback that one’s views are correct can, and does, quash the very curiosity that might lead a person to discover facts contradicting long-held beliefs.

"If you think you know a lot about something, even though you don't, you're less likely to be curious enough to explore the topic further, and will fail to learn how little you know," Marti said.

The recent study paints a more nuanced picture than previous research, which had indicated that people who believe in conspiracy theories, such as chemtrails, government cover-ups of alien landings, or the claim that the moon landing was faked, have low self-esteem or narcissistic tendencies.

That said, the more nuanced picture still largely suggests that humans, and by extension their brains, are simply lazy.

“Decades of research have shown that humans are so-called cognitive misers,” said Keith E. Stanovich of the University of Toronto in Scientific American. “When we approach a problem, our natural default is to tap the least tiring cognitive process,” which intuitively seeks out social interaction and peer bonding. “But understanding scientific evidence, a more recent achievement, involves more complex, logical and difficult…processing.”
