Elon Musk, of Tesla and SpaceX fame, is a man who recognizes no technological boundaries: his projects have included colonizing Mars, slowing climate change and revolutionizing transportation. But according to him, his most significant venture will be gaining international cooperation to ban a specific branch of artificial intelligence (AI), autonomous weapons, before it’s too late.

Open Letter to UN

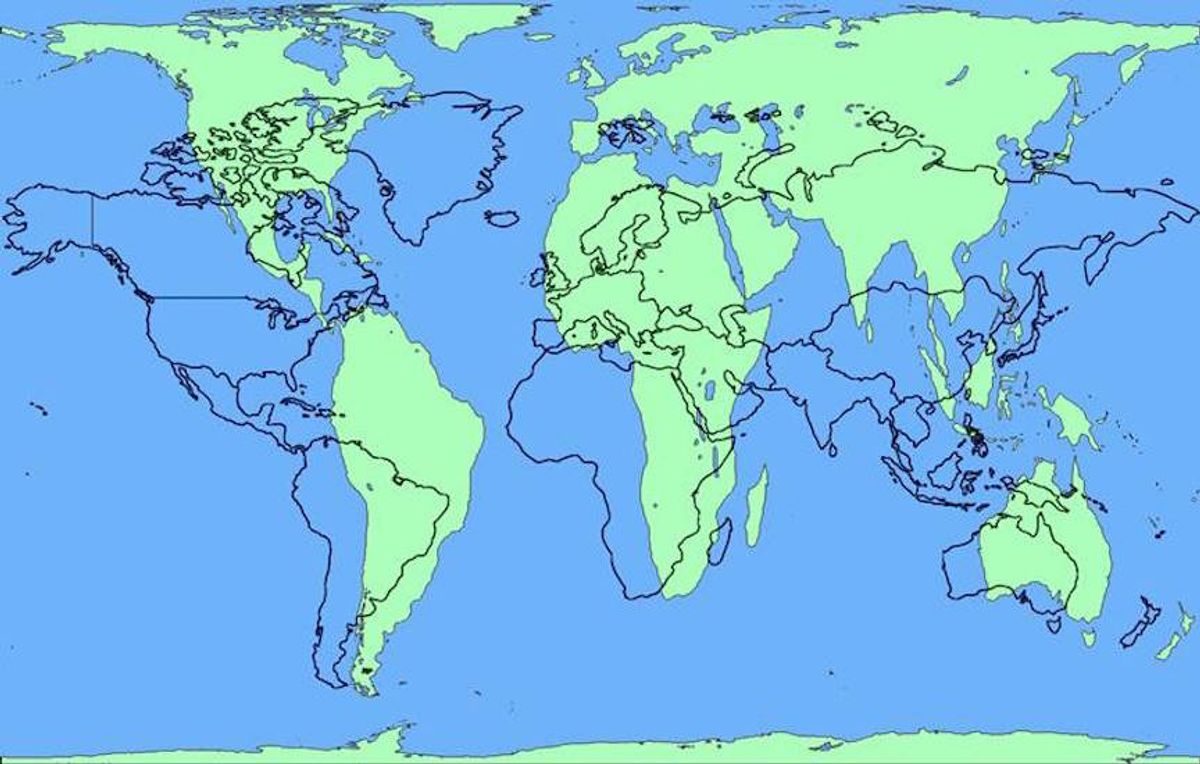

Musk and Mustafa Suleyman, of Google’s parent company Alphabet, led a group of 116 AI and robotics experts from 26 nations in an open letter (the “2017 letter”) calling for the ban of lethal autonomous weapons, often called killer robots. This is the first time leaders in the AI and robotics industries have joined forces on this issue with researchers such as Stephen Hawking, Apple co-founder Steve Wozniak, and Noam Chomsky.

At the International Joint Conference on Artificial Intelligence (IJCAI) in Melbourne, the writers presented the letter in advance of a review of the UN’s Convention on Certain Conventional Weapons (CCCW), which is considering adding robotic weapons to its list of restricted weapons. The CCCW and other corresponding treaties currently ban chemical weapons, mines and other forms of weaponry deemed to cause “unnecessary or unjustifiable suffering to combatants or to affect civilians indiscriminately.”

Pointing to these criteria, the letter explains how autonomous weapons can be used in ways envisioned by the definition in the CCCW: “These can be weapons of terror, weapons that despots and terrorists use against innocent populations, and weapons hacked to behave in undesirable ways.”

The experts’ letter also warns of a potential arms race in autonomous weapons, calling it a “third revolution in warfare,” following the development of guns and nuclear weapons.

Moreover, the writers state these so-called killer robots have the potential to escalate conflict beyond imagination. “Once developed, they will permit armed conflict to be fought at a scale greater than ever, and at timescales faster than humans can comprehend.” The letter adds, “We do not have long to act. Once this Pandora’s box is opened, it will be hard to close.”

As those most knowledgeable about the weapons’ capabilities, the writers of the letter felt obligated to act. "As companies building the technologies in Artificial Intelligence and Robotics that may be repurposed to develop autonomous weapons, we feel especially responsible in raising this alarm," they wrote.

The review of the UN’s CCCW was originally scheduled for August but was postponed until November for unrelated reasons.

Previous Warning Cries from Musk

The 2017 talks about autonomous weapons were brought on, in part, by a similar open letter presented at the IJCAI conference in 2015. Elon Musk was one of the 1,000 tech experts and scientists who signed the previous letter (the “2015 letter”) warning the conference about the dangers of autonomous weapons.

There’s an even deeper history of warning bells for autonomous technology. For instance, in 2012, the Pentagon announced that humans will forever remain involved in the final decision of what is detonated. Then, the following year, the United Nations Human Rights Council recommended a national ban on Lethal Autonomous Robots (LARs), which can choose and engage targets without human intervention.

Defining autonomous weaponry, the 2015 letter stated: “Autonomous weapons select and engage targets without human intervention.” Further, the writers provided examples: “They might include, for example, armed quadcopters that can search for and eliminate people meeting certain pre-defined criteria, but do not include cruise missiles or remotely piloted drones for which humans make all targeting decisions.”

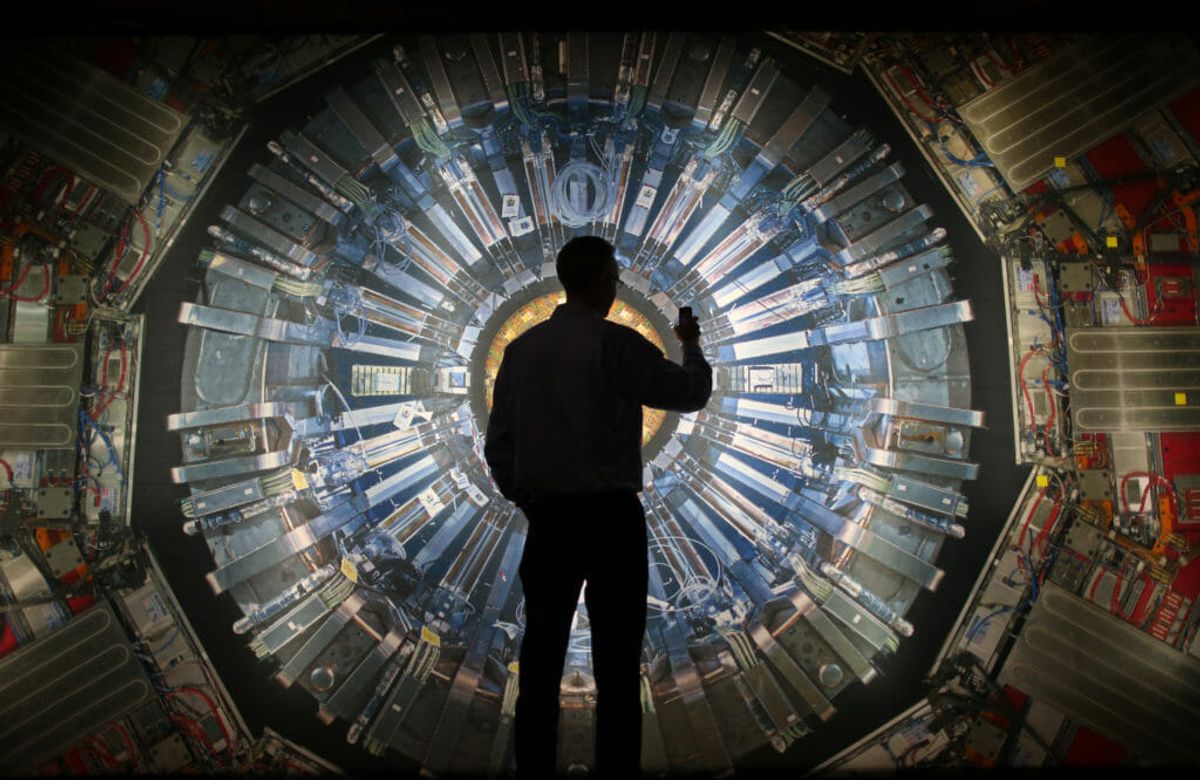

While some have dismissed autonomous weapons under this definition as years away, Musk continues to stress the need to be proactive in regulation of AI, calling it “a fundamental risk to the existence of human civilization.” To this end, he operates a non-profit known as OpenAI, which seeks to advance ethical AI research.

On August 11, 2017, while the United States and North Korea were trading threats of nuclear war, he tweeted: “If you're not concerned about AI safety, you should be. Vastly more risk than North Korea.”

Prior to that, at the U.S. National Governors Association meeting in July, Musk expressed his concerns more bluntly. “I have exposure to the very cutting-edge AI, and I think people should be really concerned about it,” he said. “I keep sounding the alarm bell, but until people see robots going down the street killing people, they don’t know how to react, because it seems so ethereal.”

Yet the 2015 letter explains the urgency of addressing the development of any type of killer robots. “The endpoint of this technological trajectory is obvious: autonomous weapons will become the Kalashnikovs of tomorrow.” Musk and the other writers added, “The key question for humanity today is whether to start a global AI arms race or to prevent it from starting.”

Should Autonomous Weapons Be Banned?

Researchers and tech experts outlined some of the primary arguments for and against the autonomous weapon ban in the 2015 letter. First, they state “that replacing human soldiers by machines is good by reducing casualties for the owner but bad by thereby lowering the threshold for going to battle.” Additionally, materials for production are inexpensive and readily available, but that also makes them more easily available to terrorists and dictators. The writers added, “Autonomous weapons are ideal for tasks such as assassinations, destabilizing nations, subduing populations and selectively killing a particular ethnic group.”

Based on these arguments, the writers “believe that a military AI arms race would not be beneficial for humanity. There are many ways in which AI can make battlefields safer for humans, especially civilians, without creating new tools for killing people.” They conclude that “[s]tarting a military AI arms race is a bad idea, and should be prevented by a ban on offensive autonomous weapons beyond meaningful human control.”

Toby Walsh, professor of artificial intelligence at the University of New South Wales in Sydney and a key organizer of the 2017 letter, views the risks of autonomous weaponry through the same lens as those of other technological developments.

“Nearly every technology can be used for good and bad, and artificial intelligence is no different. It can help tackle many of the pressing problems facing society today: inequality and poverty, the challenges posed by climate change and the ongoing global financial crisis,” Walsh said. “However, the same technology can also be used in autonomous weapons to industrialize war. We need to make decisions today choosing which of these futures we want.”

Stuart Russell, founder and Vice-President of Bayesian Logic, agrees the ban is imperative. “Unless people want to see new weapons of mass destruction – in the form of vast swarms of lethal microdrones – spreading around the world, it’s imperative to step up and support the United Nations’ efforts to create a treaty banning lethal autonomous weapons. This is vital for national and international security.”

Despite the number of experts leaning on government and political officials to regulate autonomous weapons, some actors continue to deny the urgency of this problem.

Ryan Gariepy, founder of Clearpath Robotics, said in a press statement: “This is not a hypothetical scenario, but a very real, very pressing concern which needs immediate action.”

Gariepy added, “We should not lose sight of the fact that, unlike other potential manifestations of AI which still remain in the realm of science fiction, autonomous weapons systems are on the cusp of development right now and have a very real potential to cause significant harm to innocent people along with global instability.”

Are We Too Late Already?

Ongoing delays in creating a workable ban against autonomous weapons give rise to concern that the risks of AI technology may already have developed past the point of regulation. The U.S., Russia, China, Israel and others are already developing lethal autonomous weaponry.

In fact, autonomous and semi-autonomous weapons already exist, although there is dispute as to whether any of them are yet used autonomously. The Samsung SGR-A1 sentry gun serves as an example; it’s technically capable of firing autonomously and is deployed on the South Korean side of the 2.5-mile-wide Korean Demilitarized Zone. It also has the option to fire non-lethal shots autonomously, which is its purported current use.

The UK’s BAE Taranis drone is an unmanned combat aerial vehicle, designed to replace certain human-piloted warplanes sometime after 2030. The U.S., Russia and others are developing robotic tanks, which either operate autonomously or via remote control. The U.S. Navy also is creating autonomous warships and submarine systems.

Even though it may be a while before these killer robots reach full maturity through testing and refinement, they already populate the water, earth and skies with no regulation of their production or use. Without international laws, there’s currently little incentive for nations to slow development of autonomous weaponry while competing countries are producing it.

A U.S. Department of Defense report strongly encourages increased investment in autonomous weapon technology, so that the U.S. can “remain ahead of adversaries who also will exploit its operational benefits.”

It’s starting to sound like we’re already off to the races.
