Apple’s recent refusal to assist the FBI in unlocking the iPhone used by one of the San Bernardino shooters has created a spirited debate over individual privacy versus safety. While iPhone users, and the greater public, are staying tuned to hear whether Apple will be successful in its fight to block access to the phone, the real battle will be over how secure Apple can make its phones going forward.

Apple’s Refusal to Create a “Backdoor” in the Face of a Judicial Order

The current debate centers on what role, if any, Apple should play in helping the government unlock a password-protected iPhone. The phone in question belonged to Syed Rizwan Farook, who, along with his wife Tashfeen Malik, killed 14 of Farook’s coworkers and injured 22 others during a holiday gathering. Farook and Malik were both killed by police in the hours following the attack.

In the months following, conversations between lawyers for the government and Apple about the extent to which Apple would assist in accessing the phone’s data reached an impasse. When the talks collapsed, Judge Sheri Pym of the U.S. District Court in Los Angeles, acting on a motion by the Department of Justice, ordered Apple to provide “reasonable technical assistance” to investigators attempting to unlock Farook’s phone.

In a highly publicized move, Apple refused, citing privacy and security concerns. Its widely anticipated motion to vacate was filed [Thursday], asking the court to drop its demand.

However, Apple was never completely unwilling to help the government with accessing user data. Up until last fall, Apple routinely assisted the Justice Department when receiving requests to help open a locked phone.

Even in the San Bernardino case, Apple touted its willingness to support the government. In his “customer letter,” Chief Executive Tim Cook wrote, “When the FBI has requested data that’s in our possession, we have provided it. Apple complies with valid subpoenas and search warrants, as we have in the San Bernardino case. We have also made Apple engineers available to advise the FBI, and we’ve offered our best ideas on a number of investigative options at their disposal.”

Apple’s objection to the government’s request in this case is that it asks Apple to build something new – a “backdoor” to the iPhone. Such a tool, Cook worried, would inevitably fall into the hands of hackers and be misused. Many other tech and social media giants – like Facebook, Twitter and Google – agreed, publicly supporting Apple’s position.

The iPhone’s Increased Security

Since the iPhone was first introduced, in 2007, Apple has made advancements to make the phone more secure. While that has helped protect Apple’s customers’ data, it has also made it more difficult for law enforcement to access that data when needed.

The biggest change came with a September 2014 update to Apple’s iOS software, which encrypted all iPhone user data by default. Prior to this update, law enforcement could extract data from a locked phone even without a password.

Apple followed up last year with a new hardware security feature: a processing chip devoted to securing data called “the secure enclave.” Everything the secure enclave does is encrypted, which should prevent hackers from exploiting vulnerabilities that might be found in other parts of the phone or the software.

Is Apple Capable of Creating a “Backdoor” into Farook’s Phone?

Farook’s phone was an older model – a 5C, which did not have the secure enclave. However, his phone was running the automatic encryption software. This software would erase the contents of Farook’s phone after ten incorrect password guesses.

The California judge’s order would require Apple to provide “reasonable technical assistance to assist law enforcement” in obtaining access to the data on Farook’s phone. In particular, the order requires that Apple create software that would bypass or disable the auto-erase function. It also requires that Apple make it easier for the FBI to guess the password by eliminating the five-second delay between password entries and by allowing agents to submit passwords to the phone electronically rather than typing them in manually.
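To see why those three changes matter together, a rough calculation helps. The sketch below is only back-of-the-envelope arithmetic: the ten-guess limit and the five-second delay come from the description above, while the passcode lengths (four and six digits) and the 80-millisecond cost per automated guess are assumptions chosen for illustration, not details from the order.

```python
# Rough arithmetic only. The passcode lengths and the 80 ms figure are
# illustrative assumptions, not details taken from the court order.

def worst_case_seconds(digits: int, seconds_per_guess: float) -> float:
    """Seconds needed to try every numeric passcode of the given length."""
    return (10 ** digits) * seconds_per_guess


def chance_before_erase(digits: int, allowed_guesses: int = 10) -> float:
    """Chance that random guessing succeeds before auto-erase triggers."""
    return min(1.0, allowed_guesses / (10 ** digits))


if __name__ == "__main__":
    for digits in (4, 6):
        with_delay = worst_case_seconds(digits, 5.0)   # five-second delay
        automated = worst_case_seconds(digits, 0.08)   # assumed 80 ms per guess
        print(f"{digits}-digit passcode:")
        print(f"  auto-erase on: {chance_before_erase(digits):.4%} chance in 10 guesses")
        print(f"  with delay:    {with_delay / 3600:.1f} hours worst case")
        print(f"  automated:     {automated / 3600:.2f} hours worst case")
```

Under these assumptions, a four-digit passcode gives a guesser only a 0.1 percent chance of success before the phone erases itself. With auto-erase disabled but the delay in place, trying every combination would take about 14 hours; with the delay removed and guesses submitted electronically, it would take roughly 13 minutes.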

While this software does not yet exist, there is no real dispute that Apple could create it. Apple’s objection to creating the software is not the lack of feasibility, but rather the potential that the software could fall into the wrong hands.

Even on newer phones that have the secure enclave, it appears Apple could create a workaround that would disable the auto-erase function, although it would be a more difficult task. The secure enclave has a reprogrammable component that apparently can be reset by Apple, which would create the means to access the data.

Can Apple Lock Itself Out of Future Phones?

While current phones appear to be crackable, Apple could create a new phone for which even it could not build a backdoor. To do this, Apple would need to alter the secure enclave by blocking changes to its software unless a password is entered. Alternatively (or additionally), it could modify the chip so that it would erase all the phone’s data if its software were tampered with.
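The two options can be pictured with a toy model. This is a hypothetical sketch, not Apple’s design: the class `ToyEnclave`, its fields and the `wipe_on_unauthenticated_update` flag are all invented here to illustrate the idea that a software change either requires the passcode or costs the user data.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ToyEnclave:
    """Hypothetical stand-in for a secure chip; not a real Apple interface."""
    passcode: str
    wipe_on_unauthenticated_update: bool = False
    firmware: str = "v1"
    user_data: dict = field(default_factory=lambda: {"photos": 3})

    def update_firmware(self, new_firmware: str,
                        entered_passcode: Optional[str] = None) -> str:
        # Option 1: with the correct passcode, the update keeps user data.
        if entered_passcode == self.passcode:
            self.firmware = new_firmware
            return "updated, data kept"
        # Option 2: without it, the chip erases the data before updating.
        if self.wipe_on_unauthenticated_update:
            self.user_data.clear()
            self.firmware = new_firmware
            return "updated, data wiped"
        # Otherwise the change is blocked outright.
        return "refused"


if __name__ == "__main__":
    locked = ToyEnclave(passcode="1234")
    print(locked.update_firmware("v2"))                           # refused
    print(locked.update_firmware("v2", entered_passcode="1234"))  # updated, data kept
```

In either configuration, new software installed without the owner’s passcode never runs alongside the existing user data, which is what would leave the manufacturer unable to help.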

“I bet Apple will move toward making the most sensitive parts of [Apple’s software] stack updatable only in very specific conditions: wipe user data, or keep user data only if the phone is successfully unlocked first,” said Ben Adida, security expert and lead engineer at Clever.

Apple certainly has the technical ability to do just that—but whether it has the legal ability to do so is less clear.

As Robert Cattanach, a partner at the law firm Dorsey & Whitney, said, “The real question is whether the government can leverage whatever precedent is created – including the possibility that the court does not force Apple to comply – as a basis to convince Congress to pass legislation that would require tech companies to provide them with a true backdoor to defeat encryption going forward.”

Already two states—New York and California—are looking into legislation that would require Apple and other phone makers to do just that.

At the federal level, legislation requiring that technology companies provide unencrypted data to the government has been in the works for years. Lawyers at the FBI, Justice Department and Commerce Department have drafted bills incorporating the idea

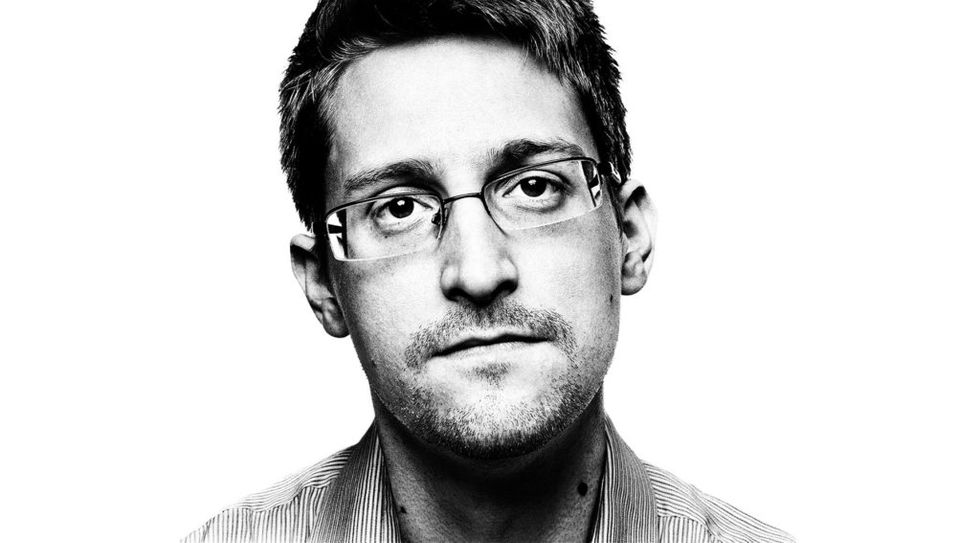

that technology companies should be bound by the same rules as phone companies, which can be required to build digital networks that government agents can tap. That legislation was shelved in 2013, when Edward Snowden exposed the extent of government surveillance operations and the debate about privacy in the United States heated up.

This case could reignite the debate and prompt federal legislation. Many politicians, Republicans and Democrats alike, have criticized Apple’s stance. Recently, Representative Adam Schiff, the top Democrat on the House of Representatives’ Intelligence Committee, said that the “complex issue” raised by the Apple case, and the prevalence of strongly encrypted devices more generally, “will ultimately need to be resolved by Congress, the administration and industry, rather than the courts alone.”

Digital rights activists are also predicting the introduction of a federal law, seeing the Apple case as a means of accelerating the process. Said Cindy Cohn, executive director of the Electronic Frontier Foundation, a digital rights group, “It’s a win-win for [the government]. If they prevail in court, they don’t need a law. And if they lose, they have it set up to go to lawmakers and say: This is what we need, the courts didn’t give it to us, so we need a new law.”

What that legislation will look like—and whether it can be passed—remains to be seen, leaving phone makers free to create phones as secure as they want to. For now.
